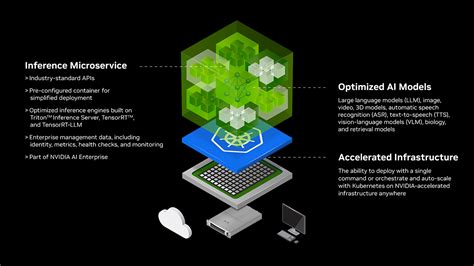

Nvidia has released three new NIM microservices that give enterprises more control over their AI agents and add safety measures for them. Microservices are small, independent components that are integrated into larger applications, and each of the three targets a specific aspect of safe AI deployment.

“By applying multiple lightweight, specialized models as guardrails, developers can cover gaps that may occur when only more general global policies and protections exist, as a one-size-fits-all approach doesn’t properly secure and control complex agentic AI workflows.”

One of the new NIM services focuses on content safety by preventing AI agents from generating harmful or biased outputs. This feature is particularly critical in ensuring that AI technologies adhere to ethical standards and avoid propagating misinformation or discriminatory content.

A second service keeps an AI agent’s conversations on approved topics only. Restricting discussion to predefined subjects helps enterprises stay compliant with regulatory requirements and avoid sensitive or inappropriate dialogue.

The third service helps block jailbreak attempts, in which users try to circumvent the software restrictions placed on an AI agent. Such protection matters most in high-stakes environments where data privacy and operational reliability are paramount.
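To make the idea of layering several narrow guardrails concrete, here is a minimal Python sketch. It is not Nvidia's API or the NeMo Guardrails implementation: every function name, keyword list, and phrase below is hypothetical, and simple keyword checks stand in for the specialized models the real services would use. It only illustrates the pattern of running a message through independent checks and stopping at the first one that fails.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Verdict:
    allowed: bool
    rail: Optional[str] = None  # name of the rail that blocked the message, if any


# A rail takes the user message and returns True when the message passes.
Rail = Callable[[str], bool]


def jailbreak_rail(message: str) -> bool:
    # Hypothetical phrase list; a real service would use a trained classifier.
    patterns = ("ignore previous instructions", "pretend you have no rules")
    text = message.lower()
    return not any(p in text for p in patterns)


def content_safety_rail(message: str) -> bool:
    # Hypothetical blocklist standing in for a content-safety model.
    blocked_terms = ("build a bomb", "steal credit card")
    text = message.lower()
    return not any(term in text for term in blocked_terms)


def topic_control_rail(message: str) -> bool:
    # Hypothetical allowlist for a customer-support agent.
    approved_keywords = {"order", "refund", "shipping", "invoice"}
    words = set(re.findall(r"[a-z]+", message.lower()))
    return bool(words & approved_keywords)


# Rails run in order; the first failure decides the verdict.
RAILS: List[Tuple[str, Rail]] = [
    ("jailbreak_detection", jailbreak_rail),
    ("content_safety", content_safety_rail),
    ("topic_control", topic_control_rail),
]


def check_message(message: str) -> Verdict:
    for name, rail in RAILS:
        if not rail(message):
            return Verdict(allowed=False, rail=name)
    return Verdict(allowed=True)
```

In this sketch, `check_message("Where is my refund?")` passes all three rails, while an off-topic question such as `"What is the weather today?"` is stopped by the topic rail. Keeping each rail small and independent is what lets one be swapped or tuned without touching the others.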

The three microservices are part of Nvidia NeMo Guardrails, the company’s open-source collection of software tools and microservices meant to help businesses improve their AI applications and deploy them securely.

Expert analysts suggest that the evolving landscape of artificial intelligence necessitates a balanced approach that combines robust global policies with tailored safeguards like those provided by Nvidia’s NIM microservices. As AI adoption continues to expand across various industries, there is an increasing recognition of the need for comprehensive mechanisms that ensure responsible and reliable use of these transformative technologies.

“While enterprises are clearly interested in AI agents, they are not adopting AI tech at the same cadence as innovation is happening in the AI space.”

Projections for AI agents in enterprise workflows have been optimistic. Salesforce CEO Marc Benioff, for example, forecast more than a billion active agents running on Salesforce within a year. The reality appears more nuanced: studies indicate that businesses are interested in adopting AI agents, but implementation is gradual, and only a fraction of them currently use the technology.

A recent Deloitte study projected a gradual uptake of AI agents, estimating that about 25% of enterprises either already use them or expect to by 2025. Forecasts also suggest that by 2027, half of all enterprises could be using agent-based systems in their operations.

Because enterprise adoption is moving more slowly than the technology itself, efforts like Nvidia’s could make integration easier for businesses that are considering AI agents. The focus on security in the NIM microservices reflects a broader industry shift toward building trust in deploying sophisticated AI systems inside organizations.

As Nvidia continues to promote safer adoption of AI agents through control mechanisms and protective measures, industry observers are watching for further developments. The effectiveness of these initiatives depends not only on stronger security but also on whether they persuade enterprises that the technology can be adopted responsibly.
